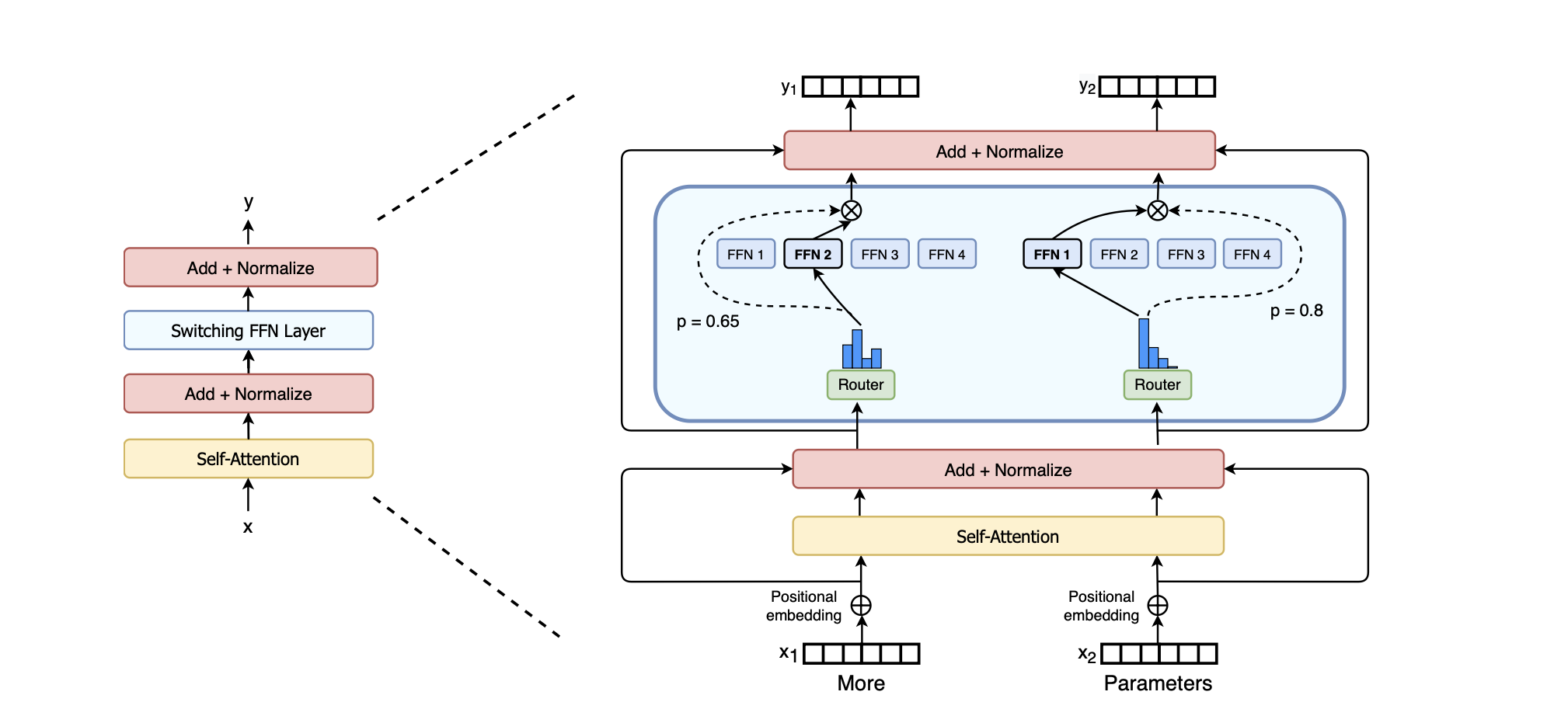

Switch Transformers: Scaling to Trillion Parameter Models with Simple and Efficient Sparsity

[Read More]

Eyeriss: An Energy-Efficient Reconfigurable Accelerator for Deep Convolutional Neural Networks

Paper Review

Introduction

[Read More]

The Art of Defending

A Systematic Evaluation and Analysis of LLM Defense Strategies on Safety and Over-Defensiveness

The paper presents the Safety and Over-Defensiveness Evaluation (SODE) benchmark, which assesses defense strategies for Large Language Models (LLMs) on both safety and over-defensiveness. It systematically evaluates and compares various strategies, revealing key findings such as the trade-off between safety improvement and over-defensiveness in self-checking techniques, and the effectiveness of safety instructions in reducing...

[Read More]

Contributing to the Bhashini Initiative

Government of India (MeitY) National Language Translation Mission

About Bhashini

[Read More]

LiqVid <> SNU: Two Stage Automated Essay Scoring (AES)

Automated Essay Scoring (AES) project: a LiqVid <> SNU collaboration.

Experiments

Python Notebooks

[Read More]